Source Culture

Why “where’s your source?” became the worst question in modern discourse

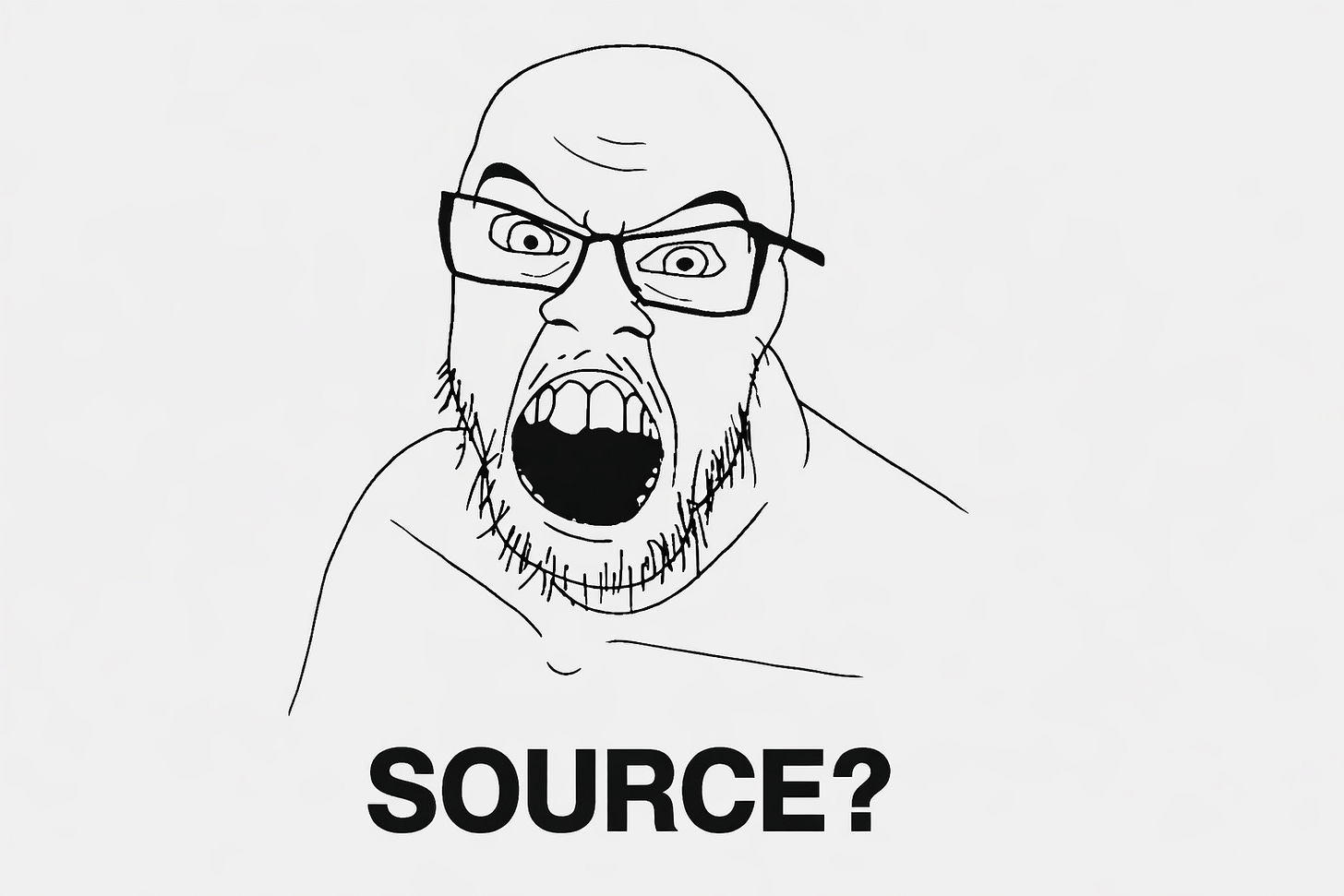

Yep, you've heard that a million times.

There’s a reflex in almost every online debate — and increasingly in real life — that goes something like this: you make a point, someone disagrees, and instead of engaging with the idea, they ask, “Where’s your source?”

Not “why do you think that?” Not “walk me through your reasoning.” Just: produce a hyperlink, or your point is invalid.

I call this Source Culture — the collective dependency on empirical citation as the sole arbiter of whether an opinion is allowed to exist. And it’s quietly destroying our ability to think.

Reddit Broke Our Brains

A lot of this traces back to internet debate culture, and Reddit sits at the epicentre. Reddit trained an entire generation to believe that winning an argument means linking a study, and losing one means you didn’t. It doesn’t matter if the study is a single survey of 200 undergraduates at one American university. It has a DOI. You don’t. You lose.

This has created a bizarre intellectual landscape where people hold strong opinions they cannot actually defend through reasoning — only through delegation. They don’t understand why something is true. They just know someone with credentials said it, and that’s enough.

This Is Mostly a Left-Wing Problem

This is disproportionately a liberal and left-wing phenomenon. The modern left has built an intellectual identity around being “the side of science” and “evidence-based policy,” which sounds great in theory. In practice, it’s produced a culture where citing a source is confused with understanding a topic, and where the absence of a citation is treated as the absence of truth.

The right has its own epistemic problems: a paranoid fringe here and there, faith-based reasoning taken too far, outright denial. I’m not pretending otherwise. But the left’s version is more insidious because it looks like rigour. It wears the costume of critical thinking while often being its opposite.

Evidence Can Come From Rationale

Here’s something that shouldn’t be controversial but somehow is: rational deduction is a form of evidence. Philosophy understood this for millennia before the randomised controlled trial existed. You can observe premises, apply logic, and arrive at a sound conclusion without a single p-value.

If I tell you that incentivising something produces more of it, I don’t need a study. That’s a deductive principle. If you demand a source for it, you’re not being rigorous — you’re being intellectually lazy in a way that masquerades as the opposite.

The Data Doesn’t Exist

Here’s what I’ve learned across years as a system architect with a background in data science: the data needed to empirically validate the overwhelming majority of the world’s ideas and arguments simply does not exist.

People imagine there’s a vast library of clean, comprehensive datasets out there confirming or denying every claim. There isn’t. Most of human experience, social dynamics, cultural phenomena, and behavioural nuance has never been captured in a structured, queryable format. It never will be. The things people argue about most passionately — politics, relationships, culture, human nature — are exactly the things least amenable to controlled study.

So when someone says “there’s no evidence for that,” what they often really mean is “nobody has run a formal study on that.” And those are two wildly different statements.

Even When Data Exists, You Shouldn’t Blindly Trust It

Let’s say the study does exist. Great. Now ask yourself: Who funded it? What was the sample? How were participants selected? What was the methodology? Were there confounding variables? Has it been replicated? How large was the effect, as opposed to how small the p-value? Was the conclusion in the abstract actually supported by the data in the paper?

If you’ve spent any time in data science, you know the answer to most of these is “it’s complicated at best and misleading at worst.” There is no perfect study. There is no unbiased dataset. Collection methods are flawed. Incentive structures corrupt. Publication bias filters what you even get to see. Replication crises are ongoing across psychology, medicine, and social science.
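The effect-size-versus-p-value question can be made concrete with a little arithmetic. What follows is a minimal sketch, not a reanalysis of any real study: it assumes a simple two-sample z-test on two equal-sized groups with unit variance, and the function name `two_sided_p_value` and both scenarios are hypothetical, chosen only for illustration.

```python
import math

def two_sided_p_value(effect_size_d: float, n_per_group: int) -> float:
    """Two-sided p-value for a two-sample z-test on standardised means.

    Assumes two equal-sized groups with unit variance, so the standard
    error of the difference in means is sqrt(2 / n_per_group).
    """
    z = effect_size_d / math.sqrt(2.0 / n_per_group)
    # For a standard normal Z, P(|Z| > z) = erfc(z / sqrt(2)).
    return math.erfc(abs(z) / math.sqrt(2.0))

# Hypothetical scenarios; d is the difference in means in standard deviations.
tiny_effect_huge_sample = two_sided_p_value(effect_size_d=0.02, n_per_group=100_000)
medium_effect_small_sample = two_sided_p_value(effect_size_d=0.5, n_per_group=20)

print(f"d=0.02, n=100000 per group: p = {tiny_effect_huge_sample:.2g}")
print(f"d=0.50, n=20 per group:     p = {medium_effect_small_sample:.2g}")
```

Under these assumptions, the negligible effect clears the conventional 0.05 threshold easily because the sample is enormous, while the much larger effect does not because the sample is small. A "significant" result, on its own, says little about whether the effect matters.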

Empiricism isn’t a magic wand. It’s a tool — a good one — but treating it as infallible is its own kind of faith.

Your Gut Isn’t Nothing

Source Culture has taught people to distrust their own pattern recognition, and that’s a mistake. If you’ve lived in the world, observed outcomes, and developed intuitions that consistently prove accurate — that means something. It doesn’t mean you’re always right. But it doesn’t mean you’re always wrong just because you can’t produce a citation.

Humans are, in fact, remarkably good at pattern recognition. It’s arguably what we’re best at. Dismissing all of it because it hasn’t been formalised into a paper is absurd.

You Are the Model

Here’s where my data science background actually becomes useful as an analogy. In machine learning, a multi-layer perceptron (a type of neural network) can learn to make accurate predictions on simple problems from a surprisingly modest amount of data. Reinforcement learning agents can develop sophisticated strategies through nothing but repeated interaction with an environment, with no labelled dataset required. They observe, they adjust, they get better.
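To make the reinforcement learning half of that concrete, here is a minimal sketch of an agent that learns purely from interaction. It uses an epsilon-greedy strategy on a multi-armed bandit, which is one of the simplest setups of this kind and is my choice for illustration, not a claim about any particular system. The function name `run_bandit`, the reward probabilities, and the parameter values are all hypothetical.

```python
import random

def run_bandit(true_reward_probs, steps=5000, epsilon=0.1, seed=0):
    """Epsilon-greedy agent on a multi-armed bandit.

    The agent never reads true_reward_probs directly; it only sees the
    0/1 reward that comes back after each action it takes.
    """
    rng = random.Random(seed)
    n_arms = len(true_reward_probs)
    pulls = [0] * n_arms          # how often each arm has been tried
    estimates = [0.0] * n_arms    # running average reward per arm

    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: try a random arm.
            arm = rng.randrange(n_arms)
        else:
            # Exploit: pick the arm that currently looks best.
            arm = max(range(n_arms), key=lambda a: estimates[a])
        reward = 1.0 if rng.random() < true_reward_probs[arm] else 0.0
        pulls[arm] += 1
        # Incremental mean: nudge the estimate toward the observed reward.
        estimates[arm] += (reward - estimates[arm]) / pulls[arm]

    return estimates, pulls

# Hypothetical environment: three options with hidden payoff rates.
estimates, pulls = run_bandit([0.2, 0.5, 0.8])
best_arm = max(range(len(estimates)), key=lambda a: estimates[a])
print("estimated reward per arm:", [round(e, 2) for e in estimates])
print("arm the agent prefers:", best_arm)
```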

Your brain is doing the same thing. Every social interaction, every observed outcome, every prediction you’ve made and gotten right or wrong — that’s training data. Your intuitions are the weights in your personal neural network, refined over years of real-world exposure.

If you’ve been predicting outcomes correctly and consistently, you are a trained model with demonstrated accuracy. The fact that no formal study validates your predictive ability doesn’t make it less real. It just means nobody’s published your weights.

The Stereotype Example

I’ll use an example to prove the point.

The stereotype that women are worse drivers. Does a rigorous, perfectly controlled, globally representative study exist that settles this definitively? I genuinely don’t know. And if it did exist, I’d have serious questions about its methodology and how “worse” was operationalised.

But here’s what I do know: if I’m driving and I see a car do something erratic — swerve without indicating, brake inexplicably, park at an angle that defies spatial reasoning — and I glance over at the driver, I can predict the gender with roughly 80-90% accuracy. That’s not a study. That’s a trained model running inference on real-world data I’ve collected involuntarily over years of driving.

Source Culture says I’m not allowed to know this — or at least, not allowed to say it — because I can’t link a peer-reviewed paper. But my prediction accuracy is my evidence. The model works. The absence of a formal citation doesn’t change that.

Think Again

None of this is an argument against evidence, science, or data. I’ve built my career on data. I believe in it. But I believe in it honestly, which means acknowledging its limits, its gaps, and the vast territory of human knowledge that it hasn’t reached and may never reach.

Source Culture doesn’t make people smarter. It makes them dependent. It outsources thinking to institutions and papers while atrophying the individual’s ability to reason, observe, and trust their own cognition.

The next time someone asks “where’s your source?”, try responding with your actual reasoning. If they can’t engage with that, the problem isn’t your lack of citations.

It’s their lack of thought.

You have an interesting analytic background to speak on this. I have definitely noticed this as well and it's been a pet peeve of mine for decades. I wrote an article about a month ago, about why the ancient Greeks were not gay, and I make a mini argument similar to what you're saying here. Before I cite my sources, I talk about how I came to this conclusion through reasoning and intuition, which I think is almost more valuable than the sources.

The need for sources seems to be directly linked to most people's complete abnegation of personal responsibility. They are completely incapable of forming their own thoughts or opinions on almost anything such as faith, history, current events, health. When I engage with these people, I get the feeling they truly don't think they are capable enough to make their own decisions and develop their own thoughts.

Thanks for the article. I appreciate your work.

Brother, you're absolutely spot-on. When I was attending university just last decade (seems like so long ago lol) there was a professor whom many held in high esteem because she was French and Oxford-educated. Likewise, many found her attractive for this and she used this to her advantage. Though I was never in one of her classes myself (she was a history professor who taught about European women's studies and the Holohoax™), I had heard via a friend of mine that she was very meticulous about source material and vehemently shot down any dissent. It was very much a class where one was required to regurgitate her specific material and any deviation resulted in failure and chastisement. Even providing contradictory information that was otherwise irrefutable from a non-approved source went unacknowledged and was dismissed with arrogance. My entire career in academia left me wary of ever pursuing my doctorates in archaeology (to say nothing of my ideology which would be deemed heretical, as you well know) and was inundated with the need to cite source material. Using one's own logic or powers of deduction was simply insufficient. To be perfectly honest, one might envision a blue-haired bespectacled homunculus arrogantly saying "source?" in disagreement with an argument every bit as much as one arguing from the basis of blind faith to stubbornly abjure any logic or reason that comes into conflict with their dogma.